Lessons from industry pioneers building the data foundation for AI agents

Last week, Rewire hosted the third edition of the Data Leadership Roundtables. We gathered a select group of industry pioneers – from retail giants like Albert Heijn and financial leaders like Nexent Bank to biochemical innovators like Corbion – to tackle a pressing question: How do we bridge the gap between fast-moving agentic AI and the scalable rigor of Data Management?

Ontologies talk: coffee is a great way to build relations.

Bridging the gap: context as the universal language

The session opened with a thesis: agentic AI moves fast, but data management scales. The true value lies in the intersection. A shared approach to context and metadata can unify these two worlds. Bridging this gap requires three strategic shifts:

- Investing in new capabilities: Prioritizing Knowledge Graphs and Model Context Protocol (MCP) integration for Data Products.

- Powering AI with trust: Leveraging Data Products to provide AI agents with context built on verified, trusted metadata.

- Democratizing context: Distributing semantics across use cases and domains to ensure AI agents speak the same "business language."

The CDO perspective: data ownership at the source

A highlight of the event was a fireside Q&A with Gabriela Filip (ex-CDO at Knab). Gabriela offered a masterclass in balancing speed with integrity. Her advice: Data ownership must live where the decisions happen.

Key takeaways from her perspective included:

- Local flexibility, global alignment: Keep operational definitions flexible at the local level. Mandate "strong alignment" only for critical, cross-domain business decisions.

- The "Knowledge-First" approach: Combine business and data expertise to effectively capture knowledge, supported by roles like Information Architects and coordinated by leadership (e.g., CDO).

Gabriela Filip and Freek van Gulden sparring over data strategies

Insights from data practitioners: the three recipes for success

Following the plenary, participants broke into deep-dive roundtables covering data governance, operational ownership, and organizational change. Here are some of the "recipes" that emerged:

- Start small, deliver value first: The path to structured governance and knowledge starts by linking data governance to business value. Start with a specific use case, let governance evolve around it, and use visible wins to reduce resistance, drive adoption, and scale.

- Flexibility over rigid architecture: With AI evolving rapidly, the only certainty is that rigid "end-state" architectures will become obsolete before they are even finished. Focus instead on decoupled, modular setups. (For deeper insights, check out this blog post.)

- Connecting defensive with offensive strategies: exploit defensive data management initiatives – like fixing metadata, glossaries – to comply with regulatory compliance. Then use this as an opportunity to create a scalable knowledge foundation that provides a fly-wheel for offensive value creation.

Participants sharing the challenges and lessons learned.

The dialogue doesn't end here

The day’s discussions reaffirmed that navigating today’s Data & AI landscape isn’t just about tools - it’s also a bit about community: Together, we’re better equipped to tackle the complexities and turn challenges into opportunities.

Until the next Roundtable!

Building your data capabilities, end-to-end

Our strategies elevate the performance of your data management from marginal or tactical results to transformational successes.

Explore our data management servicesThree design principles to create a flexible, reusable data architecture that grows with your organization.

You’ve got the prerequisites in place:

- A strategic AI roadmap. Check.

- The ability to develop data products. Check.

- Leadership alignment. Check.

The real question is: How can you build a data foundation that delivers value early without creating long-term problems, such as inconsistent metrics, fragile pipelines and technical debt.

It’s tempting to design the full data foundation upfront. In practice, this often leads to over-engineering, slow delivery, and frustrated stakeholders. A more effective approach is to start from value—while making deliberate design choices that allow the foundation to scale.

Why building a data foundation is so difficult

First, let's be clear about what we mean by a data foundation: A data foundation is the strategic layer of reusable, trusted data products, governance, and architecture that breaks down silos and turns raw data into actionable information aligned with business outcomes.

This enables organizations to accelerate analytics and AI use cases by providing consistent, high-quality data that supports both current needs and future growth. (More on data management fundamentals here.)

Building a data foundation is not just a technical exercise. Yes, you need platforms, pipelines, and integrations. But the real challenge is balancing (1) business impact, (2) trust in data, and (3) long-term scalability. Most organizations struggle because they oscillate between two common approaches to data foundation strategy.

Let's review them.

Top-down vs bottom-up data foundation approaches

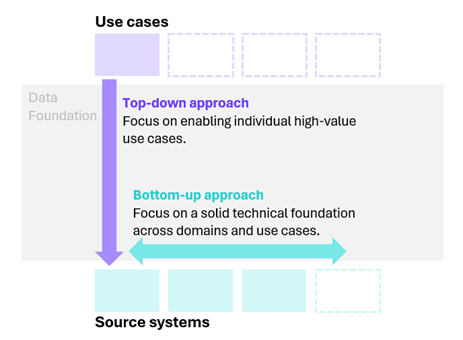

Typically, one can consider starting top-down (i.e. from a value pool perspective) or bottom-up (i.e. from a source system perspective).

1. The top-down approach

It starts by addressing a specific business problem or use case.

For example: developing a price optimization tool for your sales team by stitching together CRM data, transaction history, and external market data into a customized pipeline built solely to serve that one application.

- Pro: strong business alignment. Data products are directly tied to measurable outcomes.

- Con: risk of fragmentation. Solutions become use-case specific, difficult to reuse, and often turn into silos.

2. The bottom-up approach

It starts by building the technical foundation first.

For example: unlocking all data from your ERP and CRM systems by ingesting and modeling large volumes of operational data before concrete business use cases have been defined.

- Pro: strong reusability. Source-aligned data products improve consistency and quality.

- Con: slow time to value. It’s unclear which use cases will be enabled, delaying impact and eroding trust.

Figure 1. The top-down vs. bottom-up approach

3. The problem with either approach

Simply put, neither approach works well on its own:

- Lean too far top-down, and quick wins collapse because the underlying foundation cannot scale.

- Lean too far bottom-up, and you risk building a technically sound foundation that no one actively uses.

As a result, many organizations end up switching back and forth between these extremes, never fully unlocking the value of their data.

The solution: start top-down, but design for scale

To kickstart a data foundation effectively, we recommend a top-down, value-driven start combined with bottom-up design principles.

In practice, this means:

- Selecting priority use cases using a top-down approach, based on strategic importance and reuse potential.

- Applying scalable design principles when building data products, so today’s solution can evolve into tomorrow’s foundation.

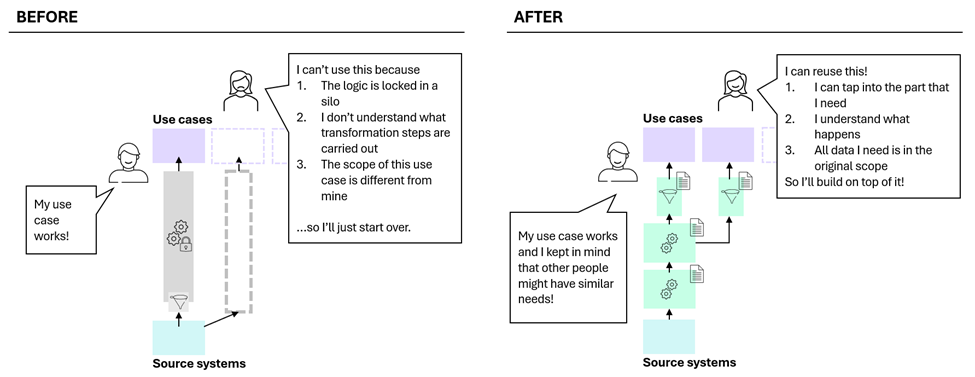

This approach allows you to demonstrate value early while avoiding the common pitfall of creating one-off data solutions. The remaining risk is that a top-down start can still lead to fragmentation if design choices are made solely in service of the first use case.

The remainder of this article focuses on concrete design principles that mitigate these top-down pitfalls, as illustrated on the left-hand side of Figure 2. These principles ensure that value-driven data products can grow into a cohesive, reusable data foundation rather than a collection of isolated solutions.

Three design principles for scalable data products

As discussed in Data as a Product: Why Just Having Data Is Not Enough, data products are more than just data or pipelines. They encapsulate business logic, definitions, and assumptions. The way this logic is implemented determines whether a data product can evolve beyond its initial use case. The following design principles help ensure a top-down start remains sustainable.

1. Decouple data transformations to enable growth

Structure data products using clearly separated source → processing → sink layers so that ingestion, transformation, and delivery are explicitly isolated. This modular setup ensures that as new use cases emerge, individual parts of the data product can be extended, split, or reused—without rearchitecting the entire pipeline.

In practice, this means:

- Clearly separating source and sink logic, including all storage interactions and integrations with upstream or downstream data products.

- Isolating individual data transformation steps into distinct, composable blocks, each with a clear responsibility and output.

Why this matters:

- Enables faster creation and evolution of data products as scope expands.

- Keeps transformation logic readable, testable, and trustworthy for consuming teams.

2. Clearly document transformation logic and scope

Explicitly document the inputs, business rules, filters, and assumptions embedded in each data product so that its meaning and boundaries are unambiguous. This documentation should evolve together with the data product as scope and logic change.

In practice, this means:

- Listing all input sources and upstream data products the logic depends on.

- Describing applied business rules and filters, including rationale where relevant.

- Defining the intended scope and known exclusions to avoid misinterpretation by consumers.

Why this matters:

- Creates transparency around definitions and inclusions.

- Makes data products easier to extend, validate, and adapt as requirements evolve.

3. Design outputs for reuse across domains

Instead of optimizing outputs for a single business domain, deliberately design data product outputs to support multiple consuming domains from the start. This requires making consumption explicit and turning it into concrete design input.

In practice, this means:

- Identifying all potential consuming domains that are likely to use the data product now or in the near future.

- Capturing archetype consumption patterns and translating these patterns into detailed output requirements.

- Implementing these requirements in a consumer-aligned data model, defined through entities, attributes, and relationships that reflect shared business concepts rather than domain-specific needs.

Why this matters:

- Encourages reuse across the organization, accelerating new use case development.

- Reduces future rework by aligning outputs with real consumption needs upfront, making data products easier to extend and scale.

Figure 2. Before and after - the result of starting top-down while designing for scale.

Conclusion: building a data foundation that lasts

By starting with high-value use cases and applying scalable data product design principles, you can deliver immediate business impact without compromising the future. Done well, this approach results in a data foundation that grows organically from real business value rather than abstract architecture.

In short: don’t try to solve everything upfront—and don’t chase only quick wins either. The key to building a strong data foundation is delivering value today while deliberately designing for tomorrow.

This article was written by Bram van Koppen, Senior Data Engineer, and Phebe Langens, Data Scientist at Rewire.

Building your data capabilities, end-to-end

Our strategies elevate the performance of your data management from marginal or tactical results to transformational successes.

Explore our data management servicesHow semantic misalignment across teams and domains holds back data interoperability, and what it takes to build shared understanding at scale

In our article 2025 data management trends: the future of organizing for value, we identified interoperability as one of the three trends shaping how organizations create value with data in 2025.

But why is interoperability rising to the top of the agenda?

Primarily, data and AI use cases are moving from single-domain problems to cross-domain questions, driven by both offensive (e.g., data-driven strategies, cross-domain AI, data monetization) and defensive (e.g., CSRD and other external reporting requirements) data strategies. Take banking, for example: assessing customer lifetime value might require transaction data from finance, interaction logs from customer service, and risk profiles from compliance. Answering these high-impact, cross-domain questions requires more than just access to each domain’s systems – it demands bringing together data across departments, with consistent meaning and context. That’s where interoperability becomes essential.

We see two main challenges:

- A complex, diverse technology landscape makes it difficult to connect data

- Inconsistent semantics, or a lack of shared definitions, which make it difficult to interpret and use data across domains

While the first challenge is increasingly supported by modern approaches, the second – semantic alignment – remains highly organization-specific, and, in many cases, unresolved.

Recent trends, such as the shift toward domain-based data ownership, inspired by the introduction of the data mesh paradigm, have magnified the problem. Decentralization improves local data quality by giving teams accountability for the data they produce, but it also increases the risk of semantic misalignment between domains. ESG reporting is one example: it requires coordination across operations, HR, and finance, each using their own systems, language and metrics.

That is why modern data and AI use cases demand true data interoperability across the organization – not just technical connection, but a shared understanding. But how do you achieve that? To answer this, we first need to break interoperability down into its key components.

Breaking down data interoperability – and why semantic chaos is often overlooked

Before solving data interoperability, organizations need to recognize the different ways data alignment can break down across domains. Data, like language, is a structured representation of the real world – designed to convey meaning, but always shaped by context. And just as human communication can run into friction across languages or cultures, data integration can break down across systems or domains. These misalignments tend to fall into three categories.

- Technical interoperability: can systems connect at all? To communicate with someone abroad, your devices first need to connect; your phones must be online and your messaging apps must be compatible. In data, different technologies (like databases, APIs or platforms) must be able to establish a connection and exchange information, say, between a relational database and a data lake.

- Syntactic interoperability: can systems interpret the structure of the data? Once you’re connected, you need to use a structure both sides can follow. A French speaker and a Japanese speaker might switch to English to make the conversation possible. In data, this means aligning on formats, schemas (data structure definitions) and naming conventions. For example, one system might label a field client_id while another uses customer_number.

- Semantic interoperability: do systems agree on the meaning of the data? Even when speaking the same language, meaning can still diverge. Take the word football: it means different things in Europe and the US. The term is shared, but the concept isn’t. In data, the same happens when teams use terms like customer or revenue but define them differently depending on their context. Without a shared understanding, the data may appear integrated – but in practice, it creates confusion and leads to misinterpretation.

Of the three interoperability categories, semantic interoperability is the hardest to solve – and yet the most critical when working across domains. Unlike technical and syntactic issues, which are increasingly addressed with modern integration platforms and tooling, semantic alignment remains dependent on shared understanding. It requires agreement on what data actually means across systems, teams and business contexts – which is exactly what makes it so difficult to solve in practice.

There is no single owner of definitions; meaning is distributed across domains. Misalignment often remains invisible until something goes wrong. And it’s not just a technical issue – resolving semantics requires input from the business, coordinated processes, and a mature approach to governance. To illustrate, consider these two real-life examples:

- In our work with a global retail organization, two systems both used the term active customer. On the surface, the data appeared to represent the same concept, but one team had it defined as a customer who logged in recently, while another had it defined as someone who had made a purchase in the past quarter. When combining these data sources without understanding the differences, this results in misleading insights.

- In another client case – a digital automotive retailer – issues arose during financial forecasting when the finance team relied on the commerce team’s definition of stock. While the commercial team defined stock as cars currently available for sale, the finance team was looking for all vehicles on the balance sheet. As a result, the financial projection was made using a fraction of the actual inventory – a semantic mismatch with material consequences.

So, even when organizations succeed in technically connecting their data – through modern platforms or federated query engines like Trino – semantic chaos often persists below the surface. In the worst case, it goes unnoticed, leading to flawed insights and strategic errors. In the best case, it’s recognized but resolving it requires extensive coordination across teams, delaying decisions and slowing down AI development.

That’s why solving semantic interoperability is so essential – and so often overlooked.

Solving semantic interoperability is a strategic investment

Once semantic interoperability is recognized as a critical challenge, the key question becomes: How do you align meaning across the organization?

In practice, we see two common approaches for organizations to manage meaning across teams and domains:

- The first is built on SLA-based standards (which define what data are delivered, how they are structured and how they should be used), where data-producing teams define agreements about how their data is produced, documented and intended to be consumed.

- The second is ontology-based modeling, which introduces a shared, formal (graph-based) model to represent the core business concepts and how they relate, creating a common language for data. Given the goal of cross-domain value creation, only concepts that are reused or interpreted across domains need to be modeled, allowing teams to participate flexibly where needed.

While ontologies require more upfront effort and governance, they provide a strong foundation for reusability, AI enablement and enterprise-wide consistency. For example, they can be used to create structured business context for GenAI agents, which helps address one of the most common fears in enterprise AI adoption: agents making decisions without the right context. A well-designed ontology enables AI systems to reason over business terms, relationships, and constraints – making outputs more aligned, explainable, and trustworthy. (See our blog post on RAG and knowledge graphs for a deeper dive into how ontologies support AI context and semantic reasoning.) Ontologies also open the door to fine-grained access management. By specifying policies on the shared semantic model – rather than hard-coded on files or specific data – organizations can define access controls based on business concepts, roles, or relationships, making it easier to adapt to changing compliance needs or organizational structures.

Solving semantic interoperability, whether through standards or ontologies, starts with understanding that this is not just a technical task. It’s a strategic investment:

- Establishing standards or ontologies takes time and expertise.

- Governance must be driven by value in data consumption, not just technical consistency.

- Leadership must make deliberate choices about which definitions matter, where alignment is needed, and how that alignment will be enforced across the organization.

The takeaway: solve your semantics to enable interoperability at scale

Ultimately, addressing semantic interoperability is a critical step in solving the broader interoperability challenge. Technical connectivity and structural alignment are foundational, but without shared meaning, data remains fragmented, misunderstood, or misused. As data and AI use cases increasingly span teams, systems, and domains, organizations that succeed will be those that treat interoperability, especially semantic alignment, as a capability to actively build, govern, and scale.

What’s next?

In our next post, we will take a closer look at ontology-based modeling in practice, including a real-world example and practical tips for getting started. For now, ask yourself the question: How does your organization define and manage the language your data speaks?

This article was written by Marnix Fetter, data scientist, and Eva Scherders, data & AI engineer at Rewire.

Building your data capabilities, end-to-end

Our strategies elevate the performance of your data management from marginal or tactical results to transformational successes.

Explore our data management services