It takes a few principles to turn data into a valuable, trustworthy, and scalable asset.

Imagine a runner, Amelia, who finishes her run every morning eager to pick up a nutritious drink from her favorite smoothie bar, “Running Smoothie”, at the corner of the street. But there’s a problem: Running Smoothie has no menu, no information about ingredients or allergens, and no standards for cleanliness or freshness. Even worse, some drinks, like a post-run mimosa, should be restricted based on age, but there is no way to identify the drinks for adults only. For long-time customers like Amelia, who know all products by heart, this isn’t much of an issue. But new customers, like Bessie, find the experience confusing and unpleasant, often deciding not to return.

Sounds strange, right? Yet, this is exactly how many organizations treat their data.

This scenario parallels the typical struggles organizations face in data management. Data pipelines can successfully transfer information from one system to another, but this alone doesn’t make the data findable, usable, or reliable for decision-making. Take a Sales team processing orders, for instance. That same data could be highly valuable for Finance, but it won’t deliver any value if the Finance team isn’t even aware the pipeline exists. This highlights a broader issue: simply moving data from point A to B falls short of a successful data exchange strategy.

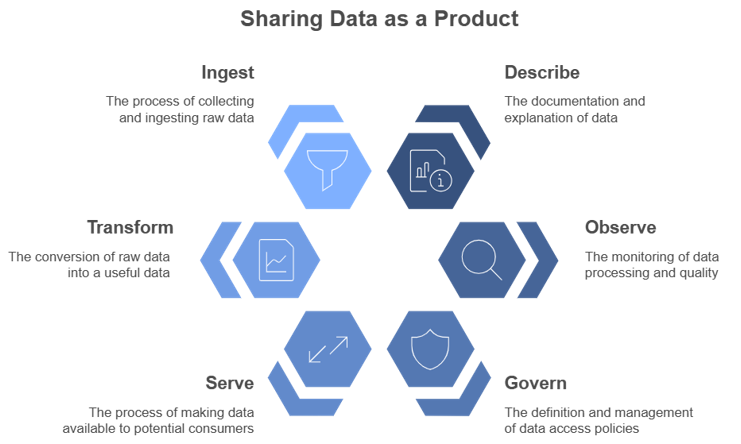

Effectively sharing Data as a Product requires sharing described, observable and governed data to ensure a smooth and scalable data exchange.

In response to this, leading organizations are embracing data as a product — a shift in mindset from viewing data as an output, to treating data as a strategic asset for value creation. Transforming data into a strategic asset requires attention to three core principles:

- Describe: ensure users can quickly find and understand the data they need, just as a well-labeled menu listing ingredients and allergens helps Bessie know what she can order.

- Observe: share data quality and reliability over time, both expected standards and unexpected deviations — like information on produce freshness and flagging when customers must wait longer than usual for their drink.

- Govern: manage who can access specific data so only authorized individuals can interact with sensitive information, similar to restricting alcoholic menu items based on an age threshold.

By embedding these foundational principles, data is not just accessible but is transformed into a dependable asset to create value organization-wide. This involves carefully designing data products with transparency and usability in mind, much like one would expect from a reputable restaurant's menu.

In this blog, we will explore why each principle is essential for an effective data exchange.

Describe: ensure data is discoverable and well-described

For data to create value, consumers need to be able to find, understand, and use it. If your team produces a dataset that’s crucial to multiple departments, but it sits on a platform no one knows about or is described so poorly that it remains ambiguous, it might as well be invisible to potential consumers.

Findable data requires a systematic approach to metadata: think of it as the digital “menu listing” of data that helps others locate and understand it. Key metadata elements include the data schema, ownership details, data models, and business definitions. By embedding these in, for example, a data catalog, data producers help their consumers not only discover data but also interpret it accurately for their specific needs.
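To make the "menu listing" idea concrete, here is a minimal sketch of what a catalog entry could look like. It assumes a hypothetical `DatasetDescriptor` structure; the class, field names, and example datasets are illustrative and do not come from any particular data catalog product.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DatasetDescriptor:
    """Hypothetical catalog entry; all field names are illustrative."""
    name: str
    owner: str
    description: str
    schema: Dict[str, str]  # column name -> data type
    business_terms: Dict[str, str] = field(default_factory=dict)

    def missing_metadata(self) -> List[str]:
        """Return the required metadata fields that are still empty."""
        required = {
            "owner": self.owner,
            "description": self.description,
            "schema": self.schema,
        }
        return [key for key, value in required.items() if not value]


def search(catalog: List[DatasetDescriptor], keyword: str) -> List[str]:
    """Return names of datasets whose name, description, or columns mention the keyword."""
    kw = keyword.lower()
    return [
        d.name
        for d in catalog
        if kw in d.name.lower()
        or kw in d.description.lower()
        or any(kw in column.lower() for column in d.schema)
    ]


# Example entries (made up for illustration).
orders = DatasetDescriptor(
    name="sales_orders",
    owner="sales-data-team",
    description="Confirmed customer orders, one row per order line.",
    schema={"order_id": "string", "order_date": "date", "net_amount": "decimal"},
    business_terms={"net_amount": "Order value excluding VAT"},
)
draft = DatasetDescriptor(name="tmp_export", owner="", description="", schema={})
catalog = [orders, draft]

print(search(catalog, "order"))   # ['sales_orders']
print(draft.missing_metadata())   # ['owner', 'description', 'schema']
```

The undescribed `tmp_export` dataset never shows up in a search, which is the "invisible dataset" problem in miniature: without an owner, a description, and a schema, a consumer like the Finance team has nothing to find it by.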

Observe: monitor data quality and performance

The next step is to share data quality: consumers need to know that what they are accessing is reliable. Data that lacks quality standards leaves users guessing whether it is recent, consistent, and complete. Without transparency, consumers might hesitate to rely on the data or, worse, make flawed decisions based on outdated or erroneous information.

By defining and sharing clear standards around data quality and availability, such as timeliness, completeness, and accuracy, you enable consumers to determine whether the data meets their needs. Providing observability into performance metrics, such as publishing data update frequency or tracking issues over time, allows users to trust the data and promotes data quality accountability.
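As an illustration of how such standards could be published alongside a dataset, the sketch below computes two of the measures mentioned above, completeness and timeliness, and compares them to agreed thresholds. The function names, the 24-hour freshness window, and the 95% completeness threshold are assumptions chosen for the example, not recommended values.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List


def completeness(rows: List[Dict[str, Any]], column: str) -> float:
    """Share of rows with a non-null value in `column`.

    An empty dataset is treated as complete (1.0); that is a design choice
    for this sketch, not a general rule.
    """
    if not rows:
        return 1.0
    filled = sum(1 for row in rows if row.get(column) is not None)
    return filled / len(rows)


def quality_report(
    rows: List[Dict[str, Any]],
    required_columns: List[str],
    last_updated: datetime,
    now: datetime,
    max_age: timedelta = timedelta(hours=24),  # assumed freshness standard
    min_completeness: float = 0.95,            # assumed completeness standard
) -> Dict[str, Any]:
    """Compare observed freshness and completeness with the agreed standards."""
    scores = {column: completeness(rows, column) for column in required_columns}
    fresh = (now - last_updated) <= max_age
    passed = fresh and all(score >= min_completeness for score in scores.values())
    return {"fresh": fresh, "completeness": scores, "passed": passed}


# Made-up sample: one of four orders is missing its amount.
rows = [
    {"order_id": "A1", "net_amount": 10.0},
    {"order_id": "A2", "net_amount": 25.5},
    {"order_id": "A3", "net_amount": None},
    {"order_id": "A4", "net_amount": 7.25},
]
now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
last_updated = datetime(2024, 1, 2, 6, 0, tzinfo=timezone.utc)

report = quality_report(rows, ["order_id", "net_amount"], last_updated, now)
print(report)
```

Here the data is fresh (updated six hours ago) but `net_amount` is only 75% complete, so the report fails. Publishing a report like this next to the dataset lets consumers judge for themselves whether the data is fit for their purpose.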

Govern: manage data access and security

Finally, a successful data product strategy is built on well-managed data access. While data should ideally be accessible to any team or individual who can create value from it, data sensitivity and compliance requirements must be taken into account. Yet, locking all data behind rigid policies slows down collaboration and might lead teams to take risky shortcuts.

A well-considered access policy strikes the right balance between accessibility and security. This involves categorizing data access levels based on potential use cases, and establishing clear guidelines on who can view, modify, or distribute data. Effectively managed access not only safeguards sensitive information but also builds trust among data producers, who can rest assured their data is treated confidentially. Meanwhile, consumers can access and use data confidently, without friction or fear of misuse.
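A simple way to picture such a policy is as a lookup from role to clearance level and permitted actions. The sketch below assumes three hypothetical sensitivity levels and three hypothetical roles; real policies would come from your organization's own classification scheme and access-management tooling.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical data classification levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2


# Hypothetical policy: role -> (highest sensitivity the role may touch, allowed actions).
POLICY = {
    "analyst": (Sensitivity.INTERNAL, {"view"}),
    "data_steward": (Sensitivity.CONFIDENTIAL, {"view", "modify"}),
    "data_owner": (Sensitivity.CONFIDENTIAL, {"view", "modify", "distribute"}),
}


def is_allowed(role: str, action: str, sensitivity: Sensitivity) -> bool:
    """Return True if `role` may perform `action` on data of the given sensitivity."""
    if role not in POLICY:
        return False  # unknown roles are denied by default
    clearance, actions = POLICY[role]
    return action in actions and sensitivity <= clearance


print(is_allowed("analyst", "view", Sensitivity.INTERNAL))           # True
print(is_allowed("analyst", "view", Sensitivity.CONFIDENTIAL))       # False
print(is_allowed("data_steward", "distribute", Sensitivity.PUBLIC))  # False
```

Keeping the policy in one explicit table, with deny-by-default for anything not listed, makes it easy for producers to see exactly who can view, modify, or distribute their data.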

Sounds easy, but the devil is in the details

For many, these foundational principles may seem straightforward. Yet, we often see companies fall into the trap of relying solely on technology to solve their Data & AI challenges, neglecting to apply these principles holistically. This tech-first approach often results in poor adoption and missed opportunities due to a lack of focus on organizational context and value delivery.

Take data catalogs, for example — essential tools for data discoverability. While it may seem like a simple matter of choosing the right tool, driving real change requires a comprehensive approach that incorporates best practices from the Playbook to Scalable Data Management. Without them, companies face long-term risks where:

- Due to a lack of standards, the catalog features data duplication, inconsistent definitions, unclear or circular data lineage, and so on. For consumers of the data, this makes the catalog difficult to navigate, eroding its usefulness.

- Due to a lack of requirements, the catalog is helpful for some teams but useless for others, inviting a proliferation of alternative tools that further complicates data access and reduces overall adoption.

This illustrates the fact that something as fundamental as a data catalog isn’t just a technological fix. Instead, it requires a coordinated, cross-functional effort that aligns with business priorities and data strategy: it is not about implementing the right tool, but about implementing the tool the right way.

Conclusion: Data as a Product, not just Data

In the end, successfully sharing data across an organization is about more than just setting up access points and handing over datasets. It demands a holistic approach to data discoverability, observability, and governance suited to your organization. By embedding these principles, organizations can overcome common pitfalls in data sharing and set up a robust foundation that turns data into a true organizational asset. It’s not only a strategic shift in data management but also a cultural one that lays the foundation for scalable, data-driven growth.

This article was written by Femke van Engen, Data Scientist, Simon Beets, Data & AI engineer, and Freek Gulden, Lead Data Engineer at Rewire.

Jonathan Dijkslag on growing and maintaining the company's edge in biotechnology and life sciences

In the latest episode of our podcast, Jonathan Dijkslag, Global Manager of Data Insights & Data Innovation at Enza Zaden, one of the world’s leading vegetable breeding companies, sits down with Ties Carbo, Principal at Rewire. With over 2,000 employees and operations in 30 countries, Enza Zaden has built its success on innovation, from pioneering biotechnology in the 1980s to embracing the transformative power of Data & AI today.

Jonathan shares insights into the company’s data-driven journey, the challenges of integrating traditional expertise with modern technology, and how cultivating a culture of trust, speed, and adaptability is shaping Enza Zaden’s future. Tune in to discover how this Dutch leader is using data to revolutionize vegetable breeding and stay ahead in a competitive, innovation-driven industry.

The transcript below has been edited for clarity and length.

Ties Carbo: Thank you very much for joining this interview. Can you please introduce yourself?

Jonathan Dijkslag: I work at Enza Zaden as the global manager of Data Insights and Innovation. My mission is to make Enza Zaden the most powerful vegetable-breeding company using data.

"It's about how to bring new skills, new knowledge to people, and combine it all together to deliver real impact."

Jonathan Dijkslag

Ties Carbo: Can you tell us a little bit about your experience on working with Data & AI?

Jonathan Dijkslag: What made Enza very successful is its breeding expertise. We are working in a product-driven market and we are really good at it. Enza grew over time thanks to the expertise of our people. In the 80s, biotech came along. And I think we were quite successful in adapting to new technologies.

Now we are running into an era where data is crucial. So I think that data is at the heart of our journey. We are a company that values our expertise. But also we are really aware of the fact that expertise comes with knowledge, and with having the right people able to leverage that knowledge in such a way that you make impact. And that's also how we look at this journey. So it's about how to bring new skills, new knowledge to people, and combine it all together to deliver real impact.

Ties Carbo: It sounds like you're in a new step of a broader journey that started decades ago. And now, of course, Data & AI is a bit more prevalent. Can you tell us a little bit more about some successes that you're having on the Data & AI front?

Jonathan Dijkslag: There are so many, but I think the biggest success of Enza is that with everything we do, we are conscious of our choices. Over the past three to five years, we have spent quite some money on the foundation for success. So where some companies are quite stressed out because of legacy systems, we do very well in starting up the right initiatives to create solid foundations and then take the time to finalize them. And I think that is very powerful within Enza. So over the past years we have built new skills based on scalable platforms in such a way that we can bring real concrete value in almost all fields of expertise in the company.

Ties Carbo: Can you maybe give a few examples of some challenges you encountered along the way?

Jonathan Dijkslag: I think the biggest challenge is that the devil is in the details. That's not only from a data journey perspective, but also in our [functional] expertise. So the real success of Enza with data depends on really high, mature [functional] expertise combined with very high, mature expertise in data. And bridging functional experts with data experts is the biggest challenge. It has always been the biggest challenge. But for a company like Enza, it's particularly complex.

Another complexity is that we have quite some people who for decades have used data heavily in their daily work. Yet the way we use data nowadays is different from decades ago. So the challenge is how to value the contributions made today and at the same time challenge people to take the next step with all the opportunities we see nowadays.

"We learned the hard way that if you don't understand – or don't want to understand what our purpose is as a company, then you will probably not be successful."

Jonathan Dijkslag

Ties Carbo: I was a bit intrigued by your first response – bridging the functional expertise with the Data & AI expertise. What's your secret to doing that?

Jonathan Dijkslag: I think it really comes back to understanding the culture of your company. When I joined the company, I knew that it would take time to understand how things work today. I needed to show my respect to everything that has brought so much success to the company in order to add something to the formula.

Every company has a different culture. Within Enza, the culture is pretty much about being Dutch. Being very direct, clear, result-driven, don't complain. Just show that you have something to add. And that's what we try to do. And learn, learn and adapt. For instance, in the war on talent, we made some mistakes. We thought maybe business expertise is not always that important. But we learned the hard way that if you don't understand – or don't want to understand what our purpose is as a company, then you will probably not be successful. And that means something to the pace and the people you can attract to the company. So it’s all about understanding the existing culture, and acting on it. And sometimes that means that you need more time than you’d like to have the right people or have the right projects finalized and create the impact you want.

"We have to create a mechanism whereby we're not too busy. We have to create a change model where you can again and again adapt to new opportunities."

Jonathan Dijkslag

Ties Carbo: What advice would you give to other companies that embark on a journey like this?

Jonathan Dijkslag: This is the new normal. The complexity is the new normal. So we have to think about how we can bring every day, every week, every month, every year more and more change. And people tend to say “I'm busy, we’re busy, wait, we have to prioritize.” I think we have to rethink that model. We have to create a mechanism whereby we're not too busy. We have to create a change model where you can again and again adapt to new opportunities. And I think this is all about creating great examples. So sometimes it’s better to make fast decisions that afterwards you would rate with a seven or an eight, but you did it fast. You were very clear and you made sure that the teams work with this decision that’s rated seven, rather than thinking for a long period about the best business case or the best ROI. So I think it's the speed of decision-making close to the area of impact. I think that's the secret.

People talk a lot about agility. I think for me, the most important part of agility is the autonomy to operate. Combined with very focused teams and super fast decision-making and the obligation to show what you did.

Ties Carbo: How is it to work with Rewire?

Jonathan Dijkslag: For me, it's very important to work with people who are committed. I have a high level of responsibility. I like to have some autonomy as well. And to balance those things, I think it's very important to be result-driven and also to show commitment in everything you do. And what we try to do in our collaboration with Rewire is to create a commitment to results. Not only on paper, but instead both parties taking ownership of success. And we measure it concretely. That's the magic. We're really in it together and there's equality in the partnership. That's how it feels for me. So I think that that's the difference.

We work a lot with genetic data. So our challenges are very specific. Life science brings some complexity with it. And we were looking for a partner who can help us develop the maturity to understand and work with confidence with the corresponding data. And thanks to their experience in the field of life science, they showed that they understand this as well. And that's very important because trust is not easily created. And if you show that you brought some successes in the world of life science - including genetic data - that helps to get the trust, and step into realistic cases.

Ties Carbo: Thank you.

About the authors

Jonathan Dijkslag is the Global Manager of Data Insights & Data Innovation at Enza Zaden, where he drives impactful data strategies and innovation in one of the world's leading vegetable breeding companies. With over 15 years of experience spanning data-driven transformation, business insights, and organizational change, Jon has held leadership roles at Pon Automotive, where he spearheaded transitions to data-driven decision-making and centralized business analytics functions. Passionate about aligning technology, culture, and strategic goals, Jon is dedicated to creating tangible business impact through data.

Ties Carbo is Principal at Rewire.

Allard de Boer on scaling data literacy, overcoming challenges, and building strong partnerships at Adevinta - owner of Marktplaats, Leboncoin, Infojobs and more.

In this podcast, Allard de Boer, Director of Analytics at Adevinta (a leading online classifieds group that includes brands like Marktplaats, Leboncoin, mobile.de, and many more), sits down with Rewire Partner Arje Sanders to explore how the company transformed its decision-making process from intuition-based to data-driven. Allard shares insights on the challenges of scaling data and analytics across Adevinta’s diverse portfolio of brands.

The transcript below has been edited for clarity and length.

Arje Sanders: Can you please introduce yourself and tell us something about your role?

Allard de Boer: I am the director of analytics at Adevinta. Adevinta is a holding company that owns multiple classifieds brands like Marktplaats in the Netherlands, Mobile.de in Germany, Leboncoin in France, etc. My role is to scale the data and analytics across the different portfolio businesses.

Arje Sanders: How did you first hear about Rewire?

Allard de Boer: I got introduced to Rewire [then called MIcompany] six, seven years ago. We started working on the Analytics academy for Marktplaats and have worked together since.

"We were talking about things like statistical significance, but actually only a few people knew what that actually meant. So we saw that there are limits to our capabilities within the organization."

Allard de Boer

Arje Sanders: Can you tell me a little about the challenges that you were facing and wanted to resolve in that collaboration? What were your team challenges?

Allard de Boer: Marktplaats is a technology company. We have enormous technology, we have a lot of data. Every solution that employees want to create has a foundation in technology. We had a lot of questions around data and analytics and every time people threw more technology at it. That ran into limitations because the people operating the technology were scarce. So we needed to scale in a different way.

What we needed to do is get more people involved in our data and analytics efforts and make sure that it was a foundational capability throughout the organization. This is when we started thinking about how to scale specific use cases further. See how we can take what we have, but then make it common throughout the whole organization and then scale specific use cases further.

For example, one problem is that we did a lot of A/B testing and experimentation. Any new feature on Marktplaats was tested through A/B testing. It was evaluated on how it performed on customer journeys, and how it performed on company revenue. This was so successful that we wanted to do more experiments. But then we ran into the limitations of how many people can look at the experimentation, and how many people understand what they're actually looking at.

We were talking about things like statistical significance, but actually only a few people knew what that actually meant. So we saw that there are limits to our capabilities within the organization. This is where we started looking for a partner that can help us to scale employee education to raise the level of literacy within our organization. That's how we came up with Rewire.

"We were an organization where a lot of gut feeling decision-making was done. We then shifted to data-driven decision-making and instantly saw acceleration in our performance."

Allard de Boer

Arje Sanders: That sounds quite complex because I assume you're talking about different groups of people with different capability levels, but also over different countries. How did you approach the challenge?

Allard de Boer: Growth came very naturally to us because we had a very good model. Marktplaats was growing very quickly in the Netherlands. Then after a while, growth started to flatten out a bit. We needed to rethink how we run the organization. We moved towards customer journeys and understanding customer needs.

Understanding those needs is difficult because we're a virtual company. Seeing what customers do, understanding what they need is something we need to track digitally. This is why the data and analytics is so vital for us as a company. If you have a physical store, you can see how people move around. If your store is only online, the data and analytics is your only corridor to understanding what the customer needs are.

When we did this change at Marktplaats, people understood instantly that the data should be leading when we make decisions. We were an organization where a lot of gut feeling decision-making was done. We then shifted to data-driven decision-making and instantly saw acceleration in our performance.

Arje Sanders: I like that move from gut feeling-based decision-making to data-driven decision-making. Can you think of a moment when you thought that this is really a decision made based on data, and it's really different than what was done before?

Allard de Boer: One of the concepts we introduced was holistic testing. Any problem we solve on Marktplaats is like a Rubik's Cube. You solve one side, but then all the other sides get messed up. For example, we introduced a new advertising position on our platform, and it performed really well on revenue. However, the customers really hated it. When only looking at revenue, we thought this is going well. However, if you looked at how customers liked it, you saw that over time the uptake would diminish because customer satisfaction would go down. This is an example of where we looked at performance from all angles and were able to scale this further.

"After training people to know where to find the data, what the KPIs mean, how to use them, what are good practices, what are bad practices, the consumption of the data really went up, and so did the quality of the decisions."

Allard de Boer

Arje Sanders: You mentioned that in this entire transformation to become more data-driven, you needed to do something about people's capabilities. How did you approach that?

Allard de Boer: We had great sponsorship from the top. We had a visionary leader in the leadership team. Because data is so important to us as a company, everybody, from the receptionist to the CEO, needs to have at least a profound understanding of the data. Within specific areas, of course, we want to go deeper and much more specific. But everybody needs to have access to the data, understand what they're looking at, and understand how to interpret the data.

Because we have so many data points and so many KPIs, you could always find a KPI that would go up. If you only focus on that one but not look at the other KPIs, you would not have the full view of what is actually happening. After training people to know where to find the data, what the KPIs mean, how to use them, what are good practices, what are bad practices, the consumption of the data really went up, and so did the quality of the decisions.

Arje Sanders: You said that you had support from the top. How important is that component?

Allard de Boer: For me, it’s vital. There's a lot of things you can do bottom up. This is where much of the innovation or the early starting of new ideas happen. However, when the leadership starts leaning in, that’s when things accelerate. Just to give you an example, I was responsible for implementing certain data governance topics a few years back. It took me months to get things on the roadmap, and up to a year to get things solved. Then we shifted the company to focus on impact and everybody had to measure their impact. Leadership started leaning in and I could get things solved within months or even weeks.

Arje Sanders: You've been collaborating with Rewire on the educational program. What do you like most about that collaboration, about this analytics university that we've developed together?

Allard de Boer: There are multiple things that really stand out for me. One is the quality of the people. The Rewire people are the best talent in the market. Also, when developing this in-house analytics academy, I was able to work with the best people within Rewire. It really helped to set up a high-quality program that instantly went well. It's also a company that oozes energy when you come in. When I go into your office, I'm always welcome. The drinks are always available at the end of the week. I think this also shows that it is a company of people working well together.

Arje Sanders: What I like a lot in those partnerships is that it comes from two sides. Not every partnership flourishes, but those that flourish are the ones that also open up to a real partnership. Once you have that, then you really see an accelerated collaboration. I like that a lot about working together.

Allard de Boer: Yes. Rewire is not just a vendor for us. You guys have been a partner for us from the start. I've always shared this as a collaborative effort. We could have never done this without Rewire. I think this is also why it's such a strong partnership over the many years.

About the authors

Allard de Boer is the Director of Global Data & Analytics at Adevinta, where he drives the scaling of data and analytics across a diverse portfolio of leading classifieds brands such as Marktplaats, Mobile.de, and Leboncoin. With over a decade of experience in data strategy, business transformation, and analytics leadership, Allard has held prominent roles, including Global Head of Analytics at eBay Classifieds Group. He is passionate about fostering data literacy, building scalable data solutions, and enabling data-driven decision-making to support business growth and innovation.

Arje Sanders is Partner at Rewire.

Demystifying the enablers and principles of scalable data management.

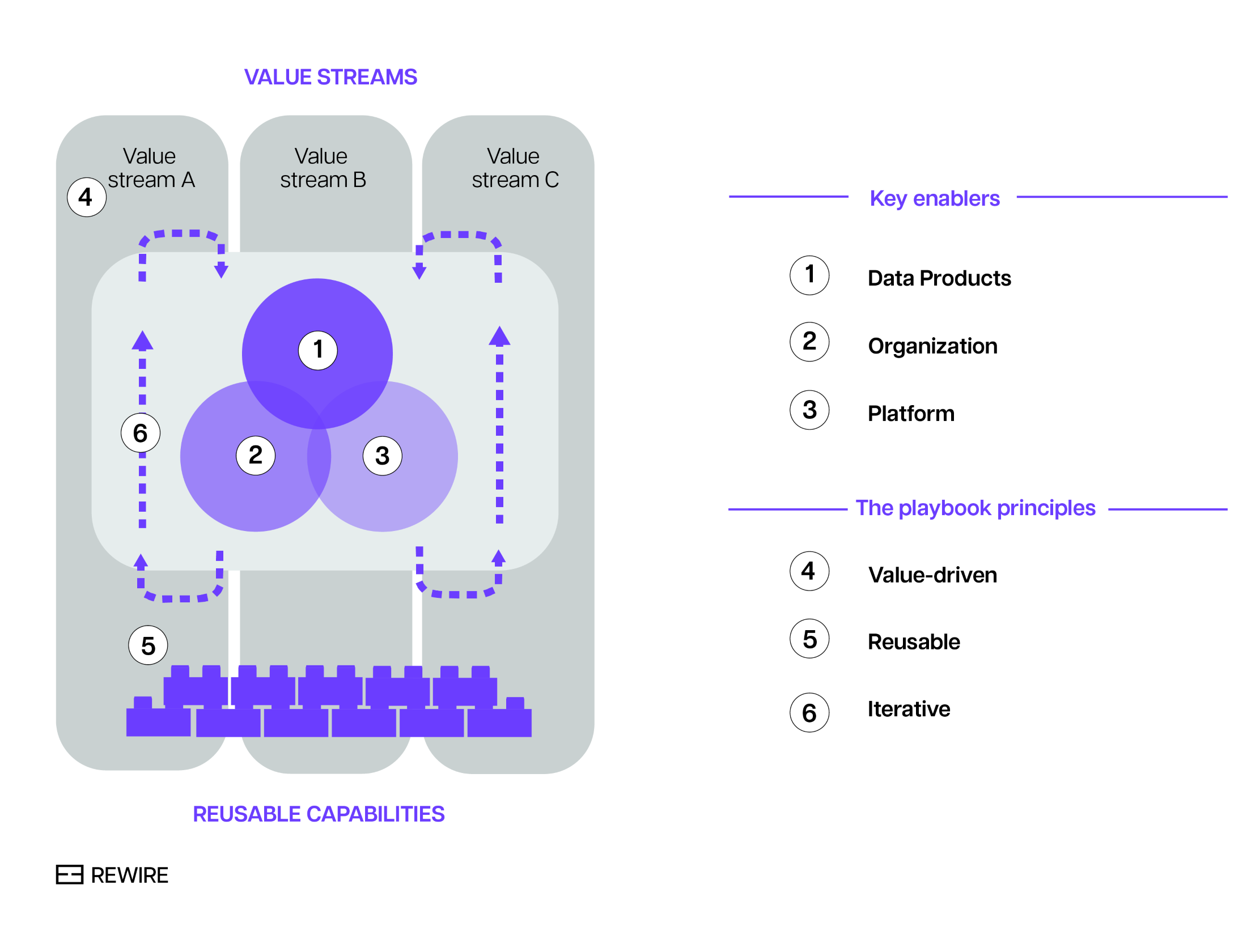

In the first instalment of our series of articles on scalable data management, we saw that companies that master the art of data management consider three enablers: (1) data products, (2) organizations, and (3) platforms. In addition, throughout the entire data management transformation, they follow three principles: value-driven, reusable, and iterative. The process is shown in the chart below.

Exhibit 1. The playbook for successful scalable data management.

Now let’s dive deeper into the enablers and principles of scalable data management.

Enabler #1: data products

Best practice dictates that data should be treated not just as an output, but as a strategic asset for value creation with a comprehensive suite of components: metadata, contract, quality specs, and so on. This means approaching data as a product, and focusing on quality and the needs of customers.

There are many things to consider, but the most important questions concern the characteristics of the data sources and consumption patterns. Specifically:

- What is the structure of the data? Is there a high degree of commonality in data types, formats, schemas, velocities? How could these commonalities be exploited to create scalability?

- How is the data consumed? Is there a pattern? Is it possible to standardize the format of output ports?

- How do data structure and data consumption considerations translate into reusable code components to create and use data products faster over time?

Enabler #2: organization

This mainly concerns the structure of data domains and clarifying the scope of their ownership (more below). This translates into organizational choices such as whether data experts are deployed centrally or decentrally. Determining factors include data and AI ambitions, use case complexity, data management maturity, and the ability to attract, develop, and retain data talent. To that end, leading companies consider the following:

- What is the right granularity and topology of the data domains?

- What is the scope of ownership in these domains? Does the ownership merely cover definitions, and does it (still) rely on a central team for implementation, or do domains have real end-to-end ownership over data products?

- Given choices on these points, what does it mean for how to distribute data experts (e.g. data engineers, data platform engineers)? Is that realistic given the size or ability to attract and develop talent or should choices be reconsidered?

Enabler #3: platforms

This enabler covers technology platforms - specifically the required (data) infrastructure and services that support the creation and distribution of data products within and between domains. Organizations need to consider:

- How best to select services and building blocks to construct a platform? Should one opt for off-the-shelf solutions, proprietary (cloud-based) services, or open-source building blocks?

- How much focus on self-service is required? For instance, a high degree of decentralization typically means a greater focus on self-service within the platform and the ability of building blocks to work in a federated way.

- What are the main privacy and security concerns and what does that mean for how security-by-design principles are incorporated into the platform?

Bringing things together: the principles of scalable data management

Although all three enablers are important on their own, the full value of AI can only be unlocked by leaders who prudently balance them throughout the whole data management transformation. For example, too much focus on platform development typically leads to organizations that struggle to create value as data (or rather, its value to the business) has been overlooked. On the other hand, companies that are too data-centric often struggle with scaling as they haven’t arranged the required governance, people, skills and platforms to remain in control of large-scale data organizations.

In short, how the key enablers are combined is as important as the enablers on their own. Hence the importance of developing a playbook that spells out how to bring things together. It begins with value, and balances the demands on data, organization and platform to create reusable capabilities that drive scalability in iterative, incremental steps. This emphasis on (1) value, (2) reusability and (3) iterative approach lies at the heart of what companies who lead in the field of scalable data management do.

Let’s review each of these principles.

Principle #1: value, from the start

The aim is to avoid two common pitfalls: the first is starting a data management transformation without a clear perspective on value. The second is failing to demonstrate value early in the transformation. (Data management transformation projects can last for years, and failing to demonstrate value early in the process erodes momentum and political capital.) Instead of focusing on many small initiatives, it is essential to prioritize the most valuable use cases. The crucial, and arguably the hardest, part is to consider not only the impact and feasibility of individual use cases but also the synergies between them.

Principle #2: reusable capabilities

Here the emphasis is on collecting, formalizing and standardizing the capabilities from core use cases. Then, re-use them for other use cases, thereby achieving scalability. Reusable capabilities encompass people capabilities, methodologies, standards and blueprints. Think about data product blueprints that include standards for data contracts, minimum requirements on metadata and data quality, standards on outputs and inputs, as well as methods on how to organize ownership, development, and deployment.
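To illustrate what reusing a blueprint could look like in practice, the sketch below defines a single hypothetical blueprint and a helper that creates new data products from it. The keys, default values, and the `new_data_product` function are assumptions made for this example; an actual blueprint would reflect your own standards for contracts, metadata, and quality.

```python
from typing import Any, Dict

# Hypothetical blueprint: minimum metadata, default quality standards, output format.
BLUEPRINT: Dict[str, Any] = {
    "required_metadata": ["owner", "description", "schema"],
    "quality_defaults": {"max_age_hours": 24, "min_completeness": 0.95},
    "output_formats": ["parquet"],
}


def new_data_product(
    name: str,
    metadata: Dict[str, Any],
    blueprint: Dict[str, Any] = BLUEPRINT,
    **quality_overrides: Any,
) -> Dict[str, Any]:
    """Create a data product definition that follows the shared blueprint."""
    missing = [key for key in blueprint["required_metadata"] if not metadata.get(key)]
    if missing:
        raise ValueError(f"{name}: missing required metadata {missing}")

    unknown = sorted(set(quality_overrides) - set(blueprint["quality_defaults"]))
    if unknown:
        raise ValueError(f"{name}: unknown quality settings {unknown}")

    return {
        "name": name,
        "metadata": dict(metadata),
        # Start from the shared defaults; a product may tighten or relax them explicitly.
        "quality": {**blueprint["quality_defaults"], **quality_overrides},
        "output_formats": list(blueprint["output_formats"]),
    }


product = new_data_product(
    "sales_orders",
    {
        "owner": "sales-data-team",
        "description": "Confirmed customer orders.",
        "schema": {"order_id": "string", "net_amount": "decimal"},
    },
    max_age_hours=1,  # this product promises hourly freshness
)
print(product["quality"])  # {'max_age_hours': 1, 'min_completeness': 0.95}
```

Every product created this way inherits the same minimum requirements, so the second and third use cases start from the standards established by the first rather than reinventing them.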

Principle #3: building iteratively

Successful data transformations progress iteratively towards their ultimate objectives, with each step chosen as the best next iteration in light of those that will follow. Usually this requires (1) assessing the data needs of the highest-value use cases and developing data products that address these needs, then (2) considering where this affects the organization and taking steps towards the new operating model. The key here is to identify the most essential platform components. Since they typically have long lead times, it's important to mitigate gaps through pragmatic solutions, for example by having technical teams assist non-technical end users, or by temporarily implementing manual processes.

Unlocking the full value of data

Data transformations are notoriously costly and time-consuming. But it doesn't have to be that way: the decoupled, decentralized nature of modern technologies and data management practices allows for a gradual, iterative, but also targeted approach to change. When done right, this approach to data transformation provides enormous opportunities for organizations to leapfrog their competitors and create the data foundation for boundless ROI.

In this first of a series of articles, we discuss the gap between the theory and practice of scalable data management.

Fifteen trillion dollars. That’s the impact of AI by 2030 on global GDP according to PwC. Yet MIT research shows that, while over 90% of large organizations have adopted AI, only 1 in 10 report significant value creation. Granted, these numbers are probably to be taken with a grain of salt. But even if these numbers are only directionally correct, it’s clear that while the potential from AI is enormous, unlocking it is a challenge.

Enter data management.

Data management is the foundation for successful AI deployment. It ensures that the data driving AI models is as effective, reliable, and secure as possible. It is also a rapidly evolving field: traditional approaches, based on centralized teams and monolithic architectures, no longer suffice in a world of exploding data. In response to that, innovative ideas have emerged, such as data mesh, data fabric, and so on. They promise scalable data production and consumption, and the elimination of bottlenecks in the data value chain. The fundamental idea is to distribute resources across the organization and enable people to create their own solutions. Wrap this with an enterprise data distribution mechanism, and voilà: scalable data management! Power to the people!

A fully federated model is not the end goal. The end goal is scalability, and the degree of decentralization is secondary.

Tamara Kloek, Principal at Rewire, Data & AI Transformation Practice Area.

There is one problem, however. The theoretical concepts are well known, but they fall short in practice. That’s because there are too many degrees of freedom when implementing them. Moreover, a fully federated model is not always the end goal. The end goal is scalability, and the degree of decentralization is secondary. So to capitalize on the scalability promise, one must navigate these degrees of freedom carefully, which is far from trivial. Ideally, there would be a playbook with unambiguous guidelines to determine the optimal answers, and explanations on how to apply them in practice.

So how do we get there? Before answering this question, let’s take a step back and review the context.

Data management: then and now

In the 2000s, when many organizations undertook their digital transformation, data was used and stored in transactional systems. For rudimentary analytical purposes, such as basic business intelligence, operational data was extracted into centralized data warehouses by a centralized team of what we now call data engineers.

This setup no longer works. What has changed? Demand, supply and data complexity. All three have surged, largely driven by the ongoing expansion of connected devices. Estimates vary by source, but by 2025 the number of connected (IoT) devices is projected to be between 30 and 50 billion globally. This trend creates new opportunities and reduces the gap between operational and analytical data: analytics and AI are being integrated into operational systems, using operational data to train prediction models. And vice versa: AI models generate predictions to steer and optimize operational processes. The boundary between analytical and operational data becomes blurred, which requires a reset on how and where data is managed. Lastly, privacy and security standards are ever increasing, not least driven by a new geopolitical context and business models that require data sharing.

Organizations that have been slow to adapt to these trends are feeling the pain. Typically they experience:

- Slow use-case development, missing data, data being trapped in systems that are impossible to navigate, or bottlenecks due to centralized data access;

- Difficulties in scaling proofs of concept because of legacy systems or poorly defined business processes;

- Lack of interoperability due to siloed data and technology stacks;

- Vulnerable data pipelines, with long resolution times if they break, as endless point-to-point connections were created in an attempt to bypass the central bottlenecks;

- Rising costs as they patch their existing system by adding people or technology solutions, instead of undertaking a fundamental redesign;

- Security and privacy issues, because they lack end-to-end observability and security-by-design principles.

The list of problems is endless.

New paradigms but few practical answers

About five years ago, new data management paradigms emerged to provide solutions. They are based on the notion of decentralized (or federated) data handling, and aim to facilitate scalability by eliminating the bottlenecks that occur in centralized approaches. The main idea is to introduce decentralized data domains. Each domain takes ownership of its data by publishing data products, with emphasis on quality and ease of use. This makes data accessible, usable, and trustworthy for the whole organization.

Domains need to own their data. Self-serve data platforms allow domains to easily create and share their data products in a uniform manner. Access to essential data infrastructure is democratized, and, as data integration across different domains is a common requirement, a federated governance model is defined. This model aims to ensure interoperability of data published by different domains.

In sum, the concepts and theories are there. However, how to make them work in practice is neither clear nor straightforward. Many organizations have jumped on the bandwagon of decentralization, yet they keep running into challenges. That’s because the guiding principles on data, domain ownership, platform and governance provide too many degrees of freedom. And implementing them is confusing at best, even for the most battle-hardened data engineers.

That is, until now.

Delivering on the scalable data management promise: three enablers and a playbook

Years of implementing data models at clients have taught us that the key to success lies in doing two things in parallel that touch on the “what” and “how” of scalable data management. The first step is to translate the high-level principles of scalable data management into organization-specific design choices. This process is structured around three enablers - the what of scalable data management:

- Data, where data is viewed as a product.

- Organization, which covers the definition of data domains and organizational structure.

- Platforms, which by design should be scalable, secure, and decoupled.

The second step addresses the how of scalable data management: a company-specific playbook that spells out how to bring things together. This playbook is characterized by the following principles:

- Value-driven: the goal is to create value from the start, with data being the underlying enabler.

- Reusable: capabilities are designed and developed in a way that they are reusable across value streams.

- Iterative: the process of value creation balances the demands on data, organization and platform with reusable capabilities that drive scalability in iterative, incremental steps.

The interplay between the three enablers (data, organization, platforms) and the playbook principles (value-driven, reusable, and iterative) is summarised in the chart below.

Exhibit 1. The playbook for successful scalable data management.

Delivering on the promise of scalability provides enormous opportunities for organizations to leapfrog the competition. The playbook for scalable data management, designed to achieve just that, has emerged through collaborations with clients across a range of industries, from semiconductors to finance and consumer goods. In future blog posts, we will discuss the finer details of its implementation and the art of building scalable data management.